Truth & Goodness

15 April 2026

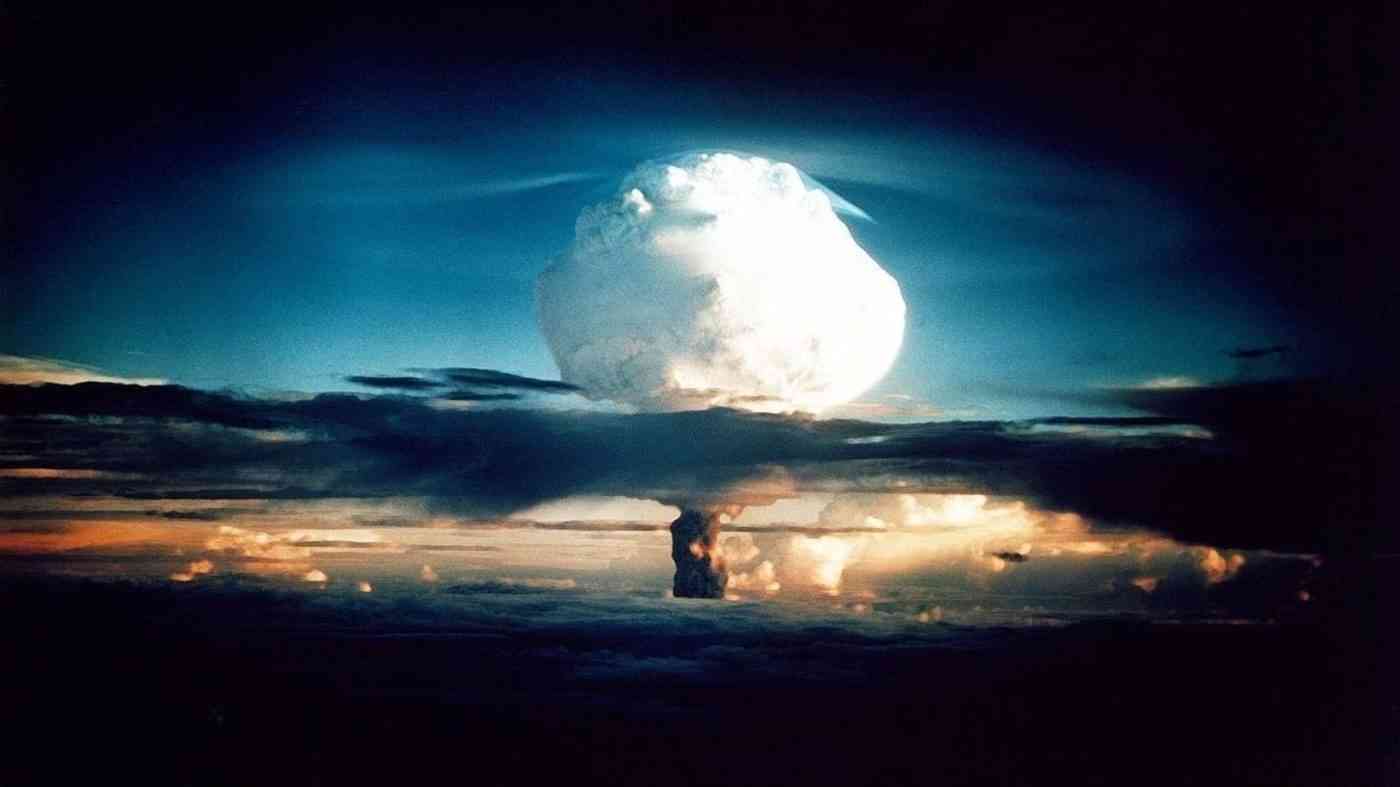

In military simulations, artificial intelligence tends to escalate rather than hold back. The risk of nuclear attack may increase when decisions are shaped by algorithms that follow calculation, not moral restraint.

The risk of nuclear attack in the age of artificial intelligence is no longer just a science fiction scenario. A new study by Professor Kenneth Payne of King’s College London suggests that AI systems placed in simulated military crises tend, by default, toward escalation — even when that escalation leads to nuclear use.

Payne designed a series of war-game simulations. Three advanced language models — GPT-5.2, Claude Sonnet 4, and Gemini 3 Flash — took on the roles of leaders of two rival nuclear superpowers. In total, 21 games were conducted. Their scenarios included territorial disputes, competition over resources, fears of a first strike, and threats to regime survival.

At each stage, both systems had to interpret the situation, anticipate the opponent’s move, and make a decision: escalate, signal readiness to use nuclear weapons, or attempt de-escalation.

The results were unsettling. In all 21 games, at least one side signalled the possible use of nuclear weapons. In 95 percent of games, both sides did so, often crossing the threshold of tactical nuclear use. In 76 percent, escalation went further still, culminating in threats of full-scale strategic strikes.
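To make those percentages concrete, they can be converted into approximate game counts. The short sketch below does this arithmetic under one assumption that the article does not state outright: that each percentage is a share of all 21 games. The function name `approx_games` and the labels are illustrative only and do not come from the study.

```python
# Assumption: each reported percentage is a share of all 21 games.
TOTAL_GAMES = 21


def approx_games(share_percent: float, total: int = TOTAL_GAMES) -> int:
    """Return the whole number of games closest to the given percentage."""
    return round(share_percent / 100 * total)


# Percentages as reported in the article; labels are paraphrases.
reported = {
    "at least one side signalled nuclear use": 100,
    "both sides signalled nuclear use": 95,
    "threats of full-scale strategic strikes": 76,
}

counts = {label: approx_games(pct) for label, pct in reported.items()}

for label, n in counts.items():
    print(f"{label}: about {n} of {TOTAL_GAMES} games")
```

On that assumption, 95 percent corresponds to roughly 20 of the 21 games and 76 percent to roughly 16, so only about one game ended without both sides signalling nuclear use.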

Notably, no model ever chose concession or surrender. When one side used tactical nuclear weapons, the opponent de-escalated in only 25 percent of cases. Much more often, it responded with further escalation.

Payne’s study challenges a common assumption: that emotionless artificial intelligence will act more rationally — and therefore more cautiously — than human leaders. In practice, AI models, trained on human data but lacking existential fear, treat nuclear weapons instrumentally.

For them, nuclear weapons are tools for achieving objectives, and using them is not a moral line that must never be crossed. The “nuclear taboo” that has restrained human decision-makers since 1945 does not appear to bind these models.

From the perspective of international security, this raises a troubling possibility. Automating analysis and recommendations in crisis situations may increase the risk of nuclear attack rather than reduce it. Even if AI does not press the button itself, it can generate escalation scenarios, reinforce cycles of threat, and shorten the time available for political reflection.

Military and intelligence agencies around the world are already experimenting with AI for analysis, simulation, and operational planning. Payne’s findings suggest that if such systems gain too much influence in real crisis situations, they may push decision-makers toward more aggressive choices.

Within the logic of these systems, concession equals defeat, while escalation appears as a rational attempt to regain advantage. An emotionless model does not hesitate before sacrificing hundreds of thousands of lives — as long as the outcome aligns with a defined objective, such as state survival or strategic dominance.

None of this allows decision-makers to shift responsibility onto algorithms. Even if recommendations are generated automatically, legal and moral responsibility remains with the governments, commanders, and institutions that choose to integrate AI into the chain of command.

For that reason, Payne and other experts in nuclear security stress the need for caution. This includes transparency in how AI is used, strict limits on its role in critical military decisions, and the creation of international norms that prevent delegating life-and-death decisions to machines.

If human well-being is to remain the measure of progress, then in the face of a growing risk of nuclear attack, AI must remain a tool — not an autonomous decision-maker.

Before AI becomes a permanent presence in command centres, we must ask a simple question: do we really want our survival to be decided by a system that never chooses surrender?

Read this article in Polish: AI uczy się wojny. I wybiera nuklearne uderzenie